In a recent client dispute, I found myself explaining something that felt completely obvious to me: their website had never gone down. We could see it live from multiple locations and devices, and we had never even touched their production server—we were working on a development copy. Yet to them, the site was “down,” and the conclusion was clear: we must have caused it. No amount of calm explanation fully bridged that gap. What felt like a simple technical misunderstanding revealed something deeper—how easily two people can look at the same situation and arrive at completely different “obvious” truths.

Truth, as we’ve discussed, is not what feels right or what fits our story. Truth is what corresponds to reality. The website was either up or down, independent of either of our opinions. But here’s the tension: even when reality is fixed, our access to it is not. We interpret, we infer, we fill in gaps. That’s why truth requires discipline. We aim at reality, but we must accept that our current understanding may still be incomplete.

Belief is where things begin to diverge. A belief is a claim about reality, but it is not reality itself. It requires justification. In this case, the client formed a belief based on their experience: “I can’t access my site, therefore it must be down, therefore they must have broken it.” It felt coherent from their perspective, yet each “therefore” skips a step: being unable to reach a site from one device does not show that the site is down, and a site being down does not show who caused it. Belief built on limited or misinterpreted evidence can still feel certain. That’s the danger: belief can feel like truth even when it isn’t.

Personal belief adds another layer. What we believe is shaped not just by evidence, but by the layers around us. Public belief—what is generally supported—was on our side: websites don’t go down globally because someone edits a development copy elsewhere. But tribal and worldview layers were also at play. Trust, past experiences with technology, and assumptions about “what companies do” all shape how someone interprets events. By the time a belief reaches this level, it’s no longer just about facts—it’s about identity and interpretation.

This is where confidence comes in. Confidence in a belief should not be absolute; it should be calibrated. In situations grounded in strong public evidence, confidence can be high—but still not immune to revision. In others, especially where interpretation and personal experience dominate, confidence should be held more loosely. Confidence comes in degrees. It rises with evidence, falls with counter-evidence, and benefits from humility. The moment we treat confidence as certainty, we stop learning—and we lose our ability to see where we might be wrong.
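One way to make "confidence comes in degrees" concrete is Bayes' rule, which is a standard way of revising a probability in light of an observation. The sketch below is only an illustration: the function name `update_confidence` and every number in it are assumptions chosen for the example, not measurements from the dispute.

```python
def update_confidence(prior: float, p_obs_if_true: float, p_obs_if_false: float) -> float:
    """Return the revised confidence in a belief after one observation (Bayes' rule).

    prior           -- confidence in the belief before the observation (0..1)
    p_obs_if_true   -- how likely the observation is if the belief is true
    p_obs_if_false  -- how likely the observation is if the belief is false
    """
    numerator = p_obs_if_true * prior
    evidence = numerator + p_obs_if_false * (1.0 - prior)
    return numerator / evidence


# Belief under test: "the site is up". All probabilities are hypothetical.
start = 0.5  # no strong opinion either way

# Supporting evidence: the site loads from an independent location.
# Assumed: very likely if the site is up, very unlikely if it is down.
after_support = update_confidence(start, p_obs_if_true=0.95, p_obs_if_false=0.05)

# Counter-evidence: one person's browser cannot load the site.
# Assumed: this sometimes happens even when the site is up (local network,
# cached DNS), and always happens when the site is actually down.
after_counter = update_confidence(after_support, p_obs_if_true=0.2, p_obs_if_false=1.0)

print(f"start: {start:.2f}")
print(f"after supporting evidence: {after_support:.2f}")
print(f"after counter-evidence: {after_counter:.2f}")
```

With these assumed numbers, confidence rises after the supporting observation and falls after the conflicting one, but it never reaches 0 or 1. That is the calibrated posture described above: strong evidence justifies high confidence, and a single conflicting observation lowers it without overturning it.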

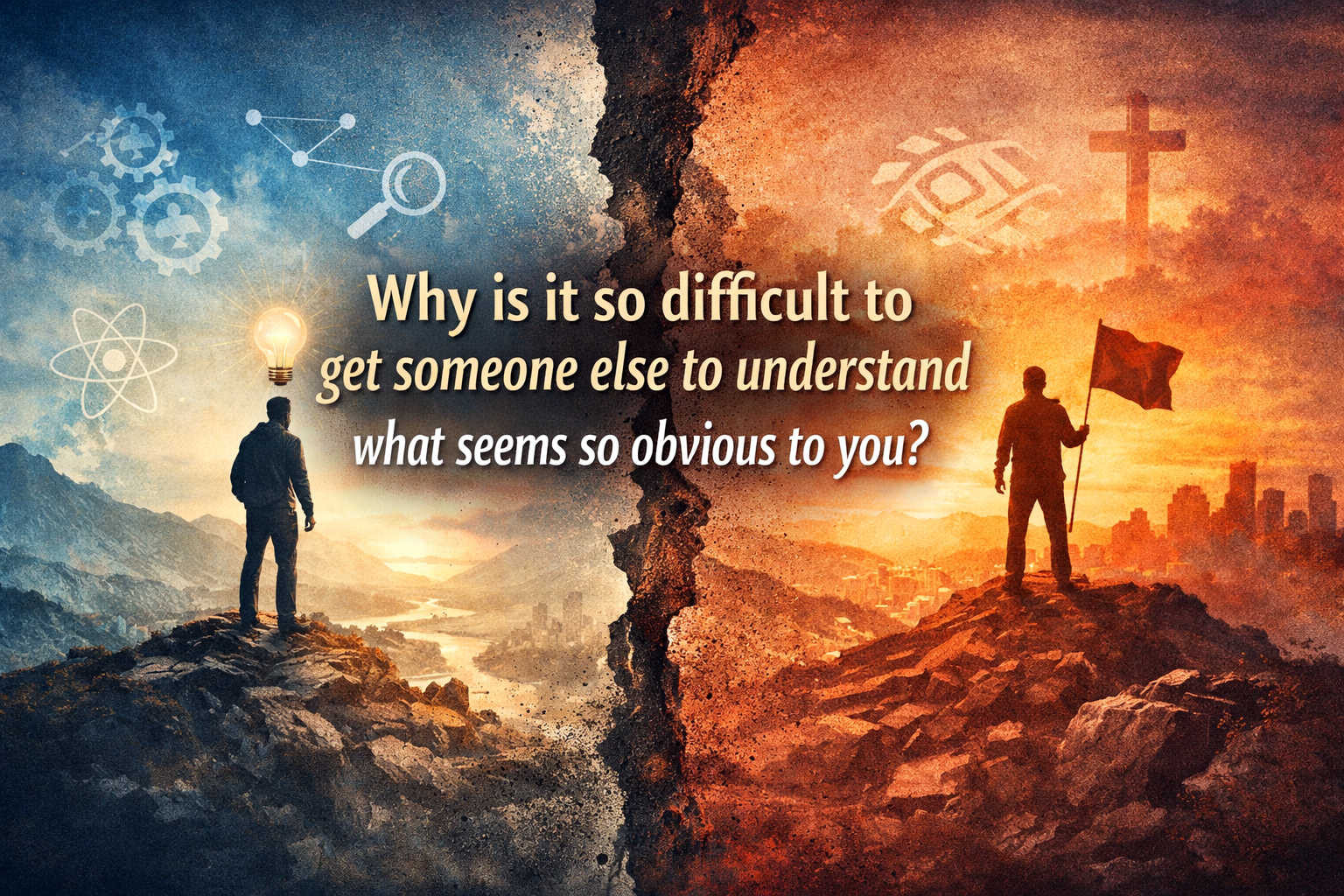

What feels obvious to you is built on layers—your evidence, your experiences, your trusted sources, and your worldview. Others may not share those layers. Their “obvious” rests on a different foundation. Confidence in belief isn’t just about being right; it’s about how well your belief is justified within a shared space of evidence. When that shared space is missing, clarity doesn’t transfer. That’s why persuasion has limits—and why recognizing degrees of confidence matters as much as the belief itself.